Normal Noise Fault

Define Fault

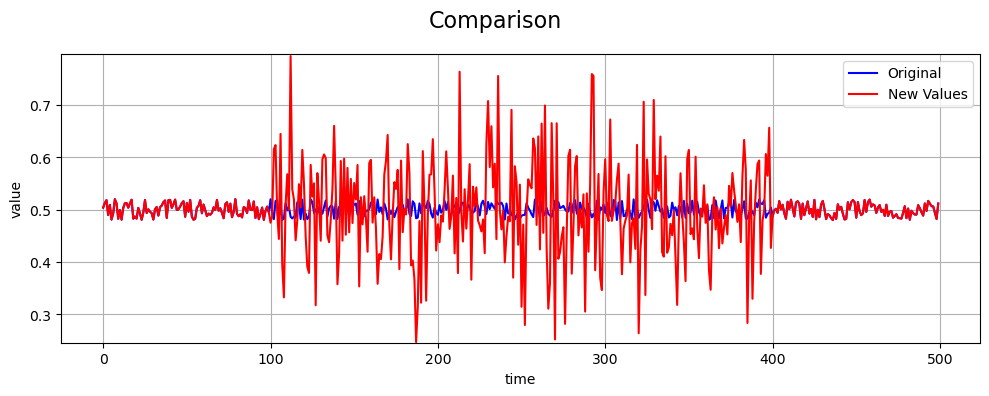

A normal noise fault models a sensor degradation where measurements are corrupted by random noise drawn from a normal (Gaussian) distribution. Unlike deterministic faults such as bias or drift, this fault introduces stochastic fluctuations that reduce signal quality; when the noise mean is zero, it adds no systematic offset.

Let \(\epsilon_i \sim \mathcal{N}(\mu, \sigma^2)\) denote the additive noise applied to the sensor signal during the fault interval. The parameters \(\mu\) and \(\sigma\) represent the noise mean and standard deviation, respectively.

Normal noise faults commonly arise from electromagnetic interference, thermal noise, vibration, or partial hardware degradation.

Math Behind Fault

Assume a univariate time series of true sensor values:

True signal: \(x_i\), for index \(i = 0, 1, \ldots, N-1\)

Fault start index: \(s\)

Fault end index: \(e\)

Linear Normal Noise Model

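The observed (faulty) signal \(y_i\) is defined as:

\[
y_i =
\begin{cases}
x_i + \epsilon_i, & \text{if } i \text{ lies in the fault interval from } s \text{ to } e \\
x_i, & \text{otherwise}
\end{cases}
\]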

The noise term is independently sampled at each time step:

\[
\epsilon_i \overset{\text{i.i.d.}}{\sim} \mathcal{N}(\mu, \sigma^2)
\]
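As a rough illustration of the additive model, the sketch below injects Gaussian noise into a window of a signal. The function name `apply_normal_noise_fault` and its parameters are hypothetical, not taken from any particular library. It assumes the end index \(e\) is inclusive, and it uses NumPy's `Generator.normal` rather than the legacy `numpy.random.normal` function listed in the references.

```python
import numpy as np


def apply_normal_noise_fault(x, start, end, mu, sigma, seed=None):
    """Return a copy of x with N(mu, sigma^2) noise added on start..end.

    Assumption: `end` is treated as inclusive. Adjust the slice if the
    fault interval is meant to be half-open.
    """
    x = np.asarray(x, dtype=float)
    if not 0 <= start <= end < x.size:
        raise ValueError("expected 0 <= start <= end < len(x)")

    rng = np.random.default_rng(seed)
    y = x.copy()  # leave the true signal untouched

    # One independent draw per time step inside the fault window.
    noise = rng.normal(loc=mu, scale=sigma, size=end - start + 1)
    y[start:end + 1] += noise
    return y


# Usage: corrupt the middle of a sine wave with zero-mean noise.
true_signal = np.sin(np.linspace(0.0, 2.0 * np.pi, 200))
faulty_signal = apply_normal_noise_fault(
    true_signal, start=50, end=149, mu=0.0, sigma=0.2, seed=0
)
```

Samples outside the window are returned unchanged, so the difference `faulty_signal - true_signal` is zero everywhere except on indices 50 through 149.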

By default, the noise parameters are:

Key Takeaway

Normal noise faults degrade signal quality by increasing randomness rather than shifting the signal systematically.
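The takeaway can be made concrete with a short calculation. Treating the true value \(x_i\) as fixed (an assumption made here for illustration), the mean and variance of a faulty sample inside the fault interval are:

\[
\mathbb{E}[y_i] = x_i + \mu, \qquad \operatorname{Var}(y_i) = \sigma^2
\]

With \(\mu = 0\) the expected value equals the true signal, so the fault shows up only as added spread of size \(\sigma\). A nonzero \(\mu\) would additionally shift the signal by \(\mu\) on average, much like a bias fault.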

Example

An example of a normal noise fault compared to the true values is shown below:

References

NumPy normal random generator: https://numpy.org/doc/stable/reference/random/generated/numpy.random.normal.html