Offset Fault

Define Fault

An offset fault models a sensor error in which a constant additive bias is applied to the measured values over a specific time window. Unlike drift, which accumulates gradually, an offset fault introduces an instantaneous and fixed deviation from the true signal.

Let \(b\) denote the offset magnitude applied during the fault interval. The offset is defined relative to the signal scale as

\[
b = r \cdot S
\]

where \(r \in \mathbb{R}\) is a dimensionless offset rate and \(S\) denotes the signal scale.

Offset faults commonly arise from calibration errors, sudden environmental changes, or sensor misalignment.

Math Behind Fault

Assume a univariate time series of true sensor values:

True signal: \(x_i\), for index \(i = 0, 1, 2, ..., N-1\)

Fault start index: \(s\)

Fault end index: \(e\)

Linear Offset Model

The observed (faulty) signal \(y_i\) is defined as:

\[
y_i =
\begin{cases}
x_i + b, & s \le i \le e \\
x_i, & \text{otherwise}
\end{cases}
\]

This represents a constant additive bias during the fault window.
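The constant additive bias can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the function name `inject_offset_fault` is hypothetical, the fault window is assumed to include both the start and the end index, and the offset \(b\) is passed in directly rather than derived from the offset rate.

```python
from typing import List, Sequence


def inject_offset_fault(x: Sequence[float], s: int, e: int, b: float) -> List[float]:
    """Return a copy of x with a constant bias b added for indices s..e.

    Assumption: the window is inclusive on both ends (s <= i <= e).
    """
    if not 0 <= s <= e < len(x):
        raise ValueError("expected 0 <= s <= e <= N-1")
    # Samples inside the fault window become x_i + b; all others are unchanged.
    return [xi + b if s <= i <= e else xi for i, xi in enumerate(x)]


true_signal = [10.0, 10.5, 9.5, 10.0, 10.5, 9.5]
faulty_signal = inject_offset_fault(true_signal, s=2, e=4, b=2.0)
print(faulty_signal)  # [10.0, 10.5, 11.5, 12.0, 12.5, 9.5]
```

Returning a new list rather than modifying `x` in place keeps the true signal available for comparison against the faulty one.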

Key Takeaway

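Within the fault window, an offset fault shifts the mean of the signal by \(b\) while leaving its variance unchanged. Statistics computed over a span that mixes faulty and fault-free samples are a different matter: there the variance generally changes as well.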
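A quick numerical check of the mean and variance behaviour, as a sketch using only Python's standard `statistics` module. The signal values, the inclusive window `s..e`, and the offset `b` are made-up illustration values. The check compares statistics inside the fault window and also over the full series, where faulty and fault-free samples are mixed.

```python
from statistics import mean, pvariance

true_signal = [10.0, 10.5, 9.5, 10.0, 10.5, 9.5]
s, e, b = 2, 4, 2.0  # assumed inclusive fault window and offset magnitude

# Apply the constant bias inside the window only.
faulty_signal = [xi + b if s <= i <= e else xi for i, xi in enumerate(true_signal)]

true_window = true_signal[s:e + 1]
faulty_window = faulty_signal[s:e + 1]

# Inside the window: the mean moves by b, the variance stays the same.
mean_shift = mean(faulty_window) - mean(true_window)
window_var_change = pvariance(faulty_window) - pvariance(true_window)

# Over the full series the step between the two levels adds spread.
full_var_change = pvariance(faulty_signal) - pvariance(true_signal)

print(f"mean shift inside window:       {mean_shift:.3f}")
print(f"variance change inside window:  {window_var_change:.3f}")
print(f"variance change over full data: {full_var_change:.3f}")
```

For these values the window mean moves by exactly `b` and the window variance is unaffected, while the variance over all six samples grows, because the series now sits at two different levels.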

Example

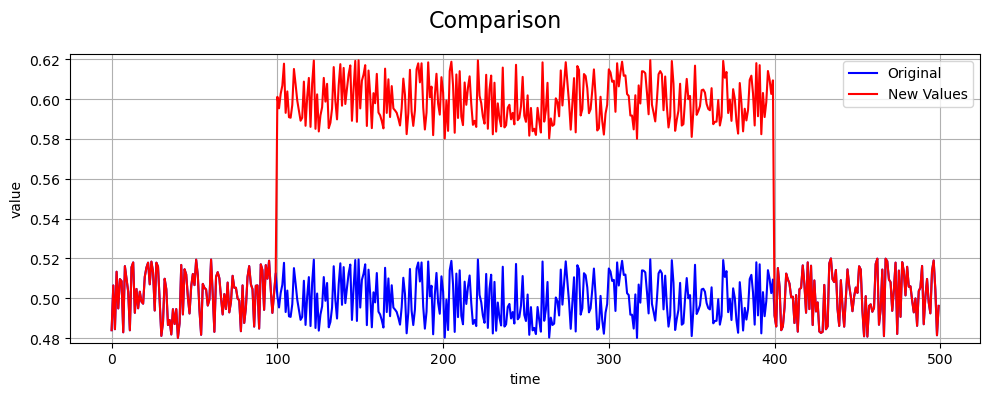

An example of an offset fault compared to the true values is shown below: