Uniform Noise Fault

Define Fault

A uniform noise fault models a sensor degradation in which measurements are corrupted by random noise drawn from a uniform distribution. Unlike normal (Gaussian) noise, which is unbounded and concentrated around its mean, uniform noise is equally likely to take any value within a bounded range.

Uniform noise faults can arise from quantization effects, sensor resolution limits, or low-level interference.

Let \(\epsilon_i \sim \mathcal{U}(a, b)\) denote the additive noise applied during the fault interval. By default, the bounds \(a\) and \(b\) are defined relative to the signal scale as

where

Math Behind Fault

Assume a univariate time series of true sensor values:

True signal: \(x_i\), for index \(i = 0, 1, 2, \ldots, N-1\)

Fault start index: \(s\)

Fault end index: \(e\)

Linear Uniform Noise Model

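The observed (faulty) signal \(y_i\) is defined as:

\[
y_i =
\begin{cases}
x_i + \epsilon_i, & \text{if } i \text{ lies in the fault interval from } s \text{ to } e, \\
x_i, & \text{otherwise,}
\end{cases}
\]

where each \(\epsilon_i\) is sampled independently from the uniform distribution \(\mathcal{U}(a, b)\).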
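The additive model can be sketched in a few lines of NumPy. This is a minimal illustration, not a reference implementation: the function name `inject_uniform_noise` and its parameters are hypothetical, the bounds `a` and `b` are passed explicitly rather than derived from the signal scale, and the fault interval is assumed to be half-open (`start` included, `end` excluded).

```python
import numpy as np


def inject_uniform_noise(x, start, end, a, b, seed=None):
    """Return a copy of x with U(a, b) noise added on indices start..end-1.

    Hypothetical helper for illustration; the half-open interval and the
    explicit bounds are assumptions, not taken from the text above.
    """
    rng = np.random.default_rng(seed)
    y = np.asarray(x, dtype=float).copy()
    # One independent draw per sample inside the fault interval.
    y[start:end] += rng.uniform(a, b, size=end - start)
    return y


# Usage: a clean sine wave with a fault between indices 50 and 119.
x = np.sin(np.linspace(0.0, 4.0 * np.pi, 200))
y = inject_uniform_noise(x, start=50, end=120, a=-0.1, b=0.1, seed=0)
```

Samples outside the fault interval are left untouched, so `y` and `x` differ only where the noise was injected.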

Key Takeaway

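Uniform noise faults degrade signal quality by adding bounded random fluctuations. The signal mean is preserved only when the bounds are symmetric about zero (\(a = -b\)); otherwise the noise shifts the mean by \((a + b)/2\) during the fault interval.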
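As a short supporting calculation, the standard moments of the uniform distribution show how the noise affects the signal. Treating \(x_i\) as deterministic and assuming \(\epsilon_i \sim \mathcal{U}(a, b)\) inside the fault interval:

\[
\mathbb{E}[\epsilon_i] = \frac{a + b}{2}, \qquad \operatorname{Var}(\epsilon_i) = \frac{(b - a)^2}{12},
\]

so that

\[
\mathbb{E}[y_i] = x_i + \frac{a + b}{2}, \qquad \operatorname{Var}(y_i) = \frac{(b - a)^2}{12}.
\]

With symmetric bounds, for example \(a = -0.1\) and \(b = 0.1\), the expected offset is zero and the noise variance is \(0.2^2 / 12 \approx 0.0033\).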

Example

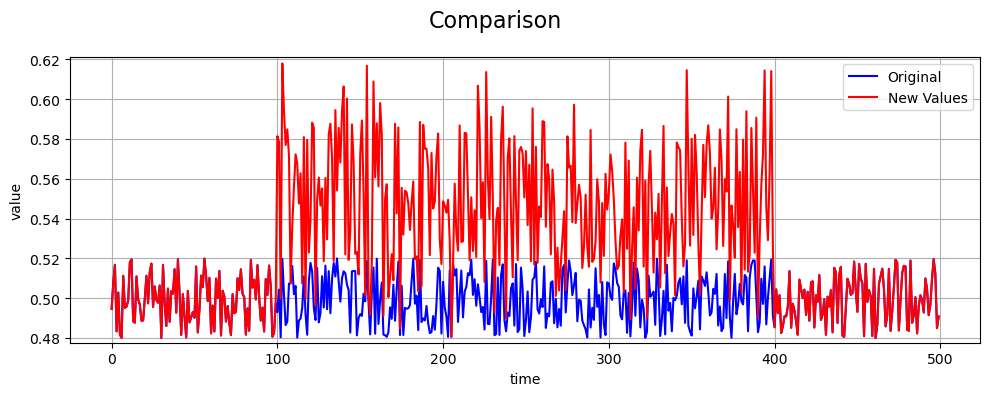

An example of a uniform noise fault compared to the true values is shown below:

References

NumPy uniform random generator: https://numpy.org/doc/stable/reference/random/generated/numpy.random.uniform.html